Web pages with the keyword "Parallel programming"

The Message Passing Interface (MPI) is an interface for inter-process communication. MPI lets processes exchange data through a strictly standardized API, and for this reason it is regarded as the de facto parallel programming model for supercomputers. The latest version of the standard, MPI-3, was released in 2012.

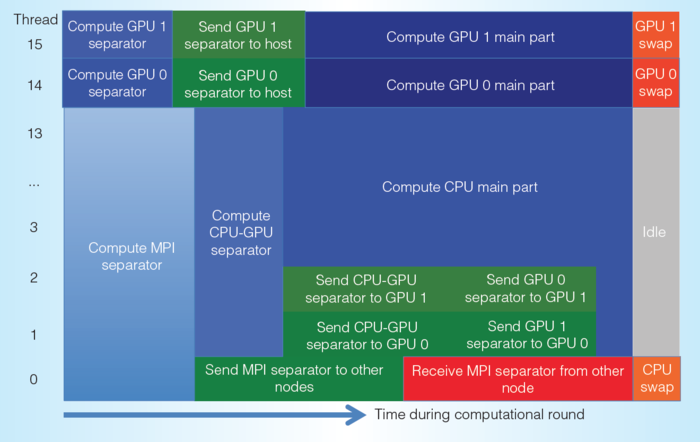

The overall objective of this master's project is to investigate whether overlapping communication with computation is necessary, and what performance benefits it can bring, in the era of heterogeneous parallel computing.

OpenMP is a well-established standard for programming shared-memory parallel computers. Since version 4.0, released in 2013, OpenMP has supported heterogeneous computing systems made up of multicore CPUs and accelerators (such as GPUs and many-integrated-core coprocessors). We want to examine how typical numerical algorithms should be re-implemented using OpenMP 4 in order to make use of heterogeneous computing systems.
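As a minimal sketch of what such a re-implementation can look like, the dense matrix-vector product below offloads its outer loop with the OpenMP 4.0 combined construct `target teams distribute parallel for`. The function name `matvec_offload` and the row-major storage of `A` are assumptions made for illustration. The `map` clauses state which arrays are copied to the device before the region and which are copied back afterwards.

```c
/* Hypothetical example: dense matrix-vector product y = A*x, where A is an
   n-by-m matrix stored row-major in a flat array. */
void matvec_offload(int n, int m, const double *A, const double *x, double *y)
{
    /* map(to: ...) copies the inputs to the device before the region runs;
       map(from: ...) copies the result back to the host afterwards. */
    #pragma omp target teams distribute parallel for \
        map(to: A[0:n*m], x[0:m]) map(from: y[0:n])
    for (int i = 0; i < n; ++i) {
        double sum = 0.0;               /* private to each iteration */
        for (int j = 0; j < m; ++j)
            sum += A[i * m + j] * x[j];
        y[i] = sum;
    }
}
```

Compiled without OpenMP support, the pragma is ignored and the loop runs serially on the CPU; with OpenMP enabled but no accelerator available, implementations typically execute the target region on the host. For iterative algorithms, wrapping the whole iteration in a `target data` region keeps the arrays resident on the device instead of copying them on every call.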